What you don’t know about the REPORTING process in Facebook

At Facebook we maintain a robust infrastructure that empowers our community of more than 900 million people to help us enforce our policies by using the report links found throughout the site. While it is unlikely that you will have any problems with content on the site, it might not always be clear what happens once you decide to click “Report.” Today, we are excited to publish a guide that will give the people who use Facebook more insight into our reporting process.

There are dedicated teams throughout Facebook working 24 hours a day, seven days a week to handle the reports made to Facebook. Hundreds of Facebook employees are in offices throughout the world to ensure that a team of Facebookers is handling reports at all times. For instance, when the User Operations team in Menlo Park is finishing up for the day, their counterparts in Hyderabad are just beginning their work keeping our site and users safe. And don’t forget, with users all over the world, Facebook handles reports in over 24 languages. Structuring the teams in this manner allows us to maintain constant coverage of our support queues for all our users, no matter where they are.

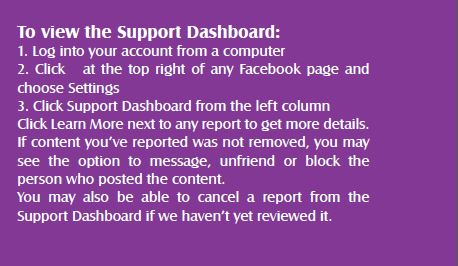

To review reports effectively, User Operations (UO) is separated into four teams, each of which reviews certain report types: the Safety team, the Hate and Harassment team, the Access team, and the Abusive Content team. When a person reports a piece of content, the report goes to one of these teams depending on the reason given. For example, if you are reporting content that you believe contains graphic violence, the Safety team will review and assess the report. And don’t forget the Support Dashboard, which will allow you to keep track of some of these reports.

If one of these teams determines that a reported piece of content violates our policies or our Statement of Rights and Responsibilities, we will remove it and warn the person who posted it. We may also revoke a user’s ability to share particular types of content or use certain features, disable a user’s account, or, if need be, refer issues to law enforcement.

We also have special teams just to handle user appeals for the instances when we might have made a mistake.

Content that violates our Community Standards is removed. However, there are situations in which something does not violate our terms, but the person may still want it removed. In the past, people reporting such content would see no action because it didn’t violate our terms. Starting last year, we launched systems that allow people to engage directly with one another to resolve their issues, beyond simply blocking or unfriending another user. Of particular note is our social reporting tool, which allows people to reach out to other users or trusted friends to help resolve the conflict or open a dialog about a piece of content.

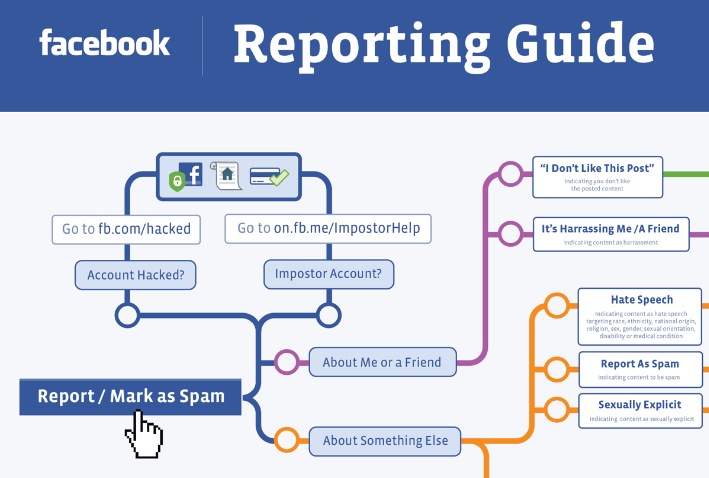

It is not only our User Operations team that supports the people who use our service; our engineers also build tools and flows to help you deal with common problems and get back into your account faster. On rare occasions, users may lose access to their accounts by forgetting their password, losing access to their email, or having their account compromised. To help these people regain access, we have built extensive online checkpoints. By using online checkpoints we can authenticate your identity both securely and quickly, which means there’s no need to wait to exchange emails with a Facebook representative before you can restore access to your account or receive a new password. Be sure to visit www.facebook.com/hacked if you believe your account has been compromised, or use the Report links to let us know about an impostor Timeline.

And it is not only the people who work at Facebook who focus on keeping our users safe; we also work closely with a wide variety of experts and outside groups. Even though we like to think of ourselves as pros at building great social products that let you share with your friends, we partner with outside experts to ensure the best possible support for our users regardless of their issue. These partnerships range from our Safety Advisory Board, which advises us on keeping our users safe, to the National CyberSecurity Alliance, which helps us educate people on keeping their data and accounts secure. Beyond our education and advisory partners, we lean on the expertise and resources of over 20 suicide prevention agencies throughout the world, including Lifeline (US/Australia), the Samaritans (UK/Hong Kong), Facebook’s Network of Support for LGBT users, and our newest partner, AASARA in India, to provide assistance to those who reach out for help on our site.

The safety and security of the people who use our site is of paramount importance to everyone here at Facebook. We are all working tirelessly at iterating on our reporting system to provide the best possible support for the people who use our site. While the complexity of our system may be bewildering, we hope that this note and infographic have increased your understanding of our processes. And, even though we hope you don’t ever need to report content on Facebook, you will now know exactly what happens to a report and how it is routed.

What is the Support Dashboard?

The Support Dashboard helps you track the status of the reports you make to Facebook (e.g., sensitive content, fake profiles). From this page you’ll be able to see when we take action on a report and what decision we’ve made.

Note: Only you can see your Support Dashboard.

About The Author

Joe Sullivan, Former Chief Security Officer at Facebook